Data Mining Decision Tree Algorithm: Java Implementation


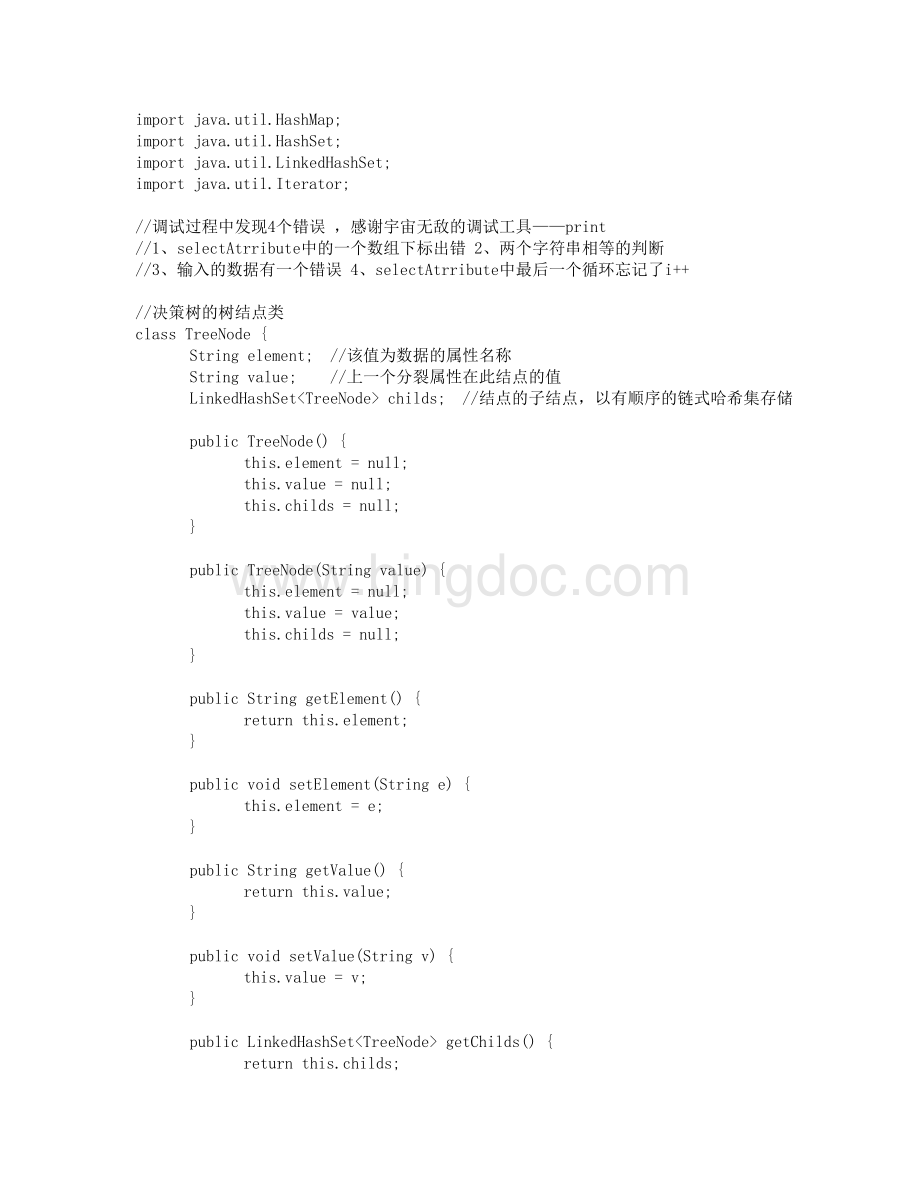

    public LinkedHashSet<TreeNode> getChilds() {
        return this.childs;
    }

    public void setChilds(LinkedHashSet<TreeNode> childs) {
        this.childs = childs;
    }
} // end of TreeNode

// Decision tree class
class DecisionTree {
    // Root node of the decision tree
    TreeNode root;

    public DecisionTree() {
        root = new TreeNode();
    }

    public DecisionTree(TreeNode root) {
        this.root = root;
    }

    public TreeNode getRoot() {
        return root;
    }

    public void setRoot(TreeNode root) {
        this.root = root;
    }

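    // Returns the not-yet-used attribute with the largest information gain
    // over the records that are active at this node.
    public String selectAtrribute(TreeNode node, String[][] deData, boolean[] flags,
            LinkedHashSet<String> atrributes, HashMap<String, Integer> attrIndexMap) {
        // Gain[k] holds the gain of the k-th unused attribute of the current node
        double[] Gain = new double[atrributes.size()];
        // Column of the class label: the last value of every record
        int class_index = deData[0].length - 1;
        // Name of the attribute this node will split on
        String return_atrribute = null;

        // Compute the gain of every unused attribute
        int count = 0; // position of the attribute currently being evaluated
        for (String atrribute : atrributes) {
            // Number of distinct values of this attribute, number of distinct classes
            int values_count, class_count;
            // Column index of this attribute
            int index = attrIndexMap.get(atrribute);
            // Distinct attribute values and class labels among the active records
            LinkedHashSet<String> values = new LinkedHashSet<String>();
            LinkedHashSet<String> classes = new LinkedHashSet<String>();
            for (int i = 0; i < deData.length; i++) {
                if (flags[i]) {
                    values.add(deData[i][index]);
                    classes.add(deData[i][class_index]);
                }
            }
            values_count = values.size();
            class_count = classes.size();

            // values_vector[j * class_count + k]: active records with value j and class k
            int[] values_vector = new int[values_count * class_count];
            // class_vector[k]: active records with class k
            int[] class_vector = new int[class_count];
            for (int i = 0; i < deData.length; i++) {
                if (flags[i]) {
                    int j = 0;
                    for (String v : values) {
                        if (deData[i][index].equals(v)) break;
                        else j++;
                    }
                    int k = 0;
                    for (String c : classes) {
                        if (deData[i][class_index].equals(c)) break;
                        else k++;
                    }
                    values_vector[j * class_count + k]++;
                    class_vector[k]++;
                }
            }

            /*
            // Print the counts
            for (int i = 0; i < values_count * class_count; i++)
                System.out.print(values_vector[i] + " ");
            System.out.println();
            for (int i = 0; i < class_count; i++)
                System.out.print(class_vector[i] + " ");
            System.out.println();
            */

            // Compute InfoD, the entropy of the class distribution
            double InfoD = 0.0;
            double class_total = 0.0;
            for (int i = 0; i < class_vector.length; i++)
                class_total += class_vector[i];
            for (int i = 0; i < class_vector.length; i++) {
                if (class_vector[i] == 0) continue;
                else {
                    double d = Math.log(class_vector[i] / class_total) / Math.log(2.0)
                            * class_vector[i] / class_total;
                    InfoD = InfoD - d;
                }
            }

            // Compute InfoA, the expected entropy after splitting on this attribute
            double InfoA = 0.0;
            for (int i = 0; i < values_count; i++) {
                double middle = 0.0;
                double attr_count = 0.0;
                for (int j = 0; j < class_count; j++)
                    attr_count += values_vector[i * class_count + j];
                // NOTE: the source text is cut off from here up to the "> max" test below.
                // The lines in between are a reconstruction that assumes the standard
                // ID3 information-gain formula; they are not the original author's text.
                for (int j = 0; j < class_count; j++) {
                    int n = values_vector[i * class_count + j];
                    if (n != 0) {
                        double p = n / attr_count;
                        middle -= p * Math.log(p) / Math.log(2.0);
                    }
                }
                InfoA += middle * attr_count / class_total;
            }
            Gain[count] = InfoD - InfoA;
            count++;
        }

        // Pick the attribute with the largest gain. The initial value of max is
        // an assumption; starting below zero guarantees that some attribute is
        // returned even when every gain is 0.
        double max = Double.NEGATIVE_INFINITY;
        int i = 0;
        for (String atrribute : atrributes) {
            if (Gain[i] > max) {
                max = Gain[i];
                return_atrribute = atrribute;
            }
            i++;
        }
        return return_atrribute;
    }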

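    // node:         the node at which the (sub)tree is built
    // deData:       the data set
    // flags:        marks which records are needed when building the tree at this node
    // attributes:   the attributes not yet used for splitting
    // attrIndexMap: maps each attribute name to its column index in the data
    public void buildDecisionTree(TreeNode node, String[][] deData, boolean[] flags,
            LinkedHashSet<String> attributes, HashMap<String, Integer> attrIndexMap) {
        // Case 1: no attributes are left to split on
        if (attributes.isEmpty()) {
            // Count the classes over all active records to find the majority class
            HashMap<String, Integer> classMap = new HashMap<String, Integer>();
            int classIndex = deData[0].length - 1;
            for (int i = 0; i < deData.length; i++) {
                if (flags[i]) {
                    if (classMap.containsKey(deData[i][classIndex])) {
                        int count = classMap.get(deData[i][classIndex]);
                        classMap.put(deData[i][classIndex], count + 1);
                    } else {
                        classMap.put(deData[i][classIndex], 1);
                    }
                }
            }
            // Choose the majority class
            String mostClass = null;
            int mostCount = 0;
            Iterator<String> it = classMap.keySet().iterator();
            while (it.hasNext()) {
                String strClass = it.next();
                if (classMap.get(strClass) > mostCount) {
                    mostClass = strClass;
                    mostCount = classMap.get(strClass);
                }
            }
            // Label the node; it is a leaf
            node.setElement(mostClass);
            node.setChilds(null);
            System.out.println("yezhi:" + node.getElement() + ":" + node.getValue());
            return;
        }

        // Case 2: all active records belong to the same class
        int class_index = deData[0].length - 1;
        String class_name = null;
        HashSet<String> classSet = new HashSet<String>();
        for (int i = 0; i < deData.length; i++) {
            if (flags[i]) {
                class_name = deData[i][class_index];
                classSet.add(class_name);
            }
        }
        // Then the node is a leaf: set its values and return
        if (classSet.size() == 1) {
            node.setElement(class_name);
            node.setChilds(null);
            System.out.println("leaf:" + node.getElement() + ":" + node.getValue());
            return;
        }

        // Open question from the original: can a branch end up with no tuples?

        // Case 3: choose a splitting attribute
        String attribute = selectAtrribute(node, deData, flags, attributes, attrIndexMap);
        // Store the splitting attribute in the node
        node.setElement(attribute);
        // System.out.println(attribute);
        if (node == root)
            System.out.println("root:" + node.getElement() + ":" + node.getValue());
        else
            System.out.println("branch:" + node.getElement() + ":" + node.getValue());

        // Create one child per value of the attribute seen in the active records
        int attrIndex = attrIndexMap.get(attribute);
        LinkedHashSet<String> attrValues = new LinkedHashSet<String>();
        for (int i = 0; i < deData.length; i++) {
            if (flags[i]) attrValues.add(deData[i][attrIndex]);
        }
        LinkedHashSet<TreeNode> childs = new LinkedHashSet<TreeNode>();
        for (String attrValue : attrValues) {
            TreeNode tn = new TreeNode(attrValue);
            childs.add(tn);
        }
        node.setChilds(childs);

        // Remove the chosen attribute from the candidates
        attributes.remove(attribute);

        // Recurse into every child
        if (!childs.isEmpty()) {
            for (TreeNode child : childs) {
                // Records active for this child: active here and matching the child's value
                boolean[] newFlags = new boolean[deData.length];
                for (int i = 0; i < deData.length; i++) {
                    newFlags[i] = flags[i];
                    // Compare strings with equals(), not != (reference comparison)
                    if (!deData[i][attrIndex].equals(child.getValue())) newFlags[i] = false;
                }
                // Each child gets its own copy of the remaining attributes
                LinkedHashSet<String> newAttributes = new LinkedHashSet<String>();
                for (String attr : attributes) {
                    newAttributes.add(attr);
                }
                // Build the subtree below the child
                buildDecisionTree(child, deData, newFlags, newAttributes, attrIndexMap);
            }
        }
    }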

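    // Print the decision tree (left empty in the original)
    public void printDecisionTree() {
    }
}

public class DeTree {
    public static void main(String[] args) {
        /*
        // Input data set 1
        String[][] deData = new String[12][];
        deData[0] = new String[]{"Yes", "No", "No", "Yes", "Some", "high", "No", "Yes", "French", "010", "Yes"};
        deData[1] = new String[]{"Yes", "No", "No", "Yes", "Full", "low", "No", "No", "Thai", "3060", "No"};
        deData[2] = new String[]{"No", "Yes", "No", "No", "Some", "low", "No", "No", "Burger", "010", "Yes"};
        deData[3] = new String[]{"Yes", "No", "Yes", "Yes", "Full", "low", "Yes", "No", "Thai", "1030", "Yes"};
        deData[4] = new String[]{"Yes", "No", "Yes", "No", "Full", "high", "No", "Yes", "French", "60", "No"};
        deData[5] = new String[]{"No", "Yes", "No", "Yes", "Some", "middle", "Yes", "Yes", "Italian", "010", "Yes"};
        deData[6] = new String[]{"No", "Yes", "No", "No", "None", "low", "Yes", "No", "Burger", "010", "No"};
        deData[7] = new String[]{"No", "No", "No", "Yes", "Some", "middle", "Yes", "Yes", "Thai", "010", "Yes"};
        deData[8] = new String[]{"No", "Yes", "Yes", "No", "Full", "low", "Yes", "No", "Burger", "60", "No"};
        deData[9] = new String[]{"Yes", "Yes", "Yes", "Yes", "Full", "high", "No", "Yes", "Italian", "1030", "No"};
        deData[10] = new String[]{"No", "No", "No", "No", "None", "low", "No", "No", "Thai", "010", "No"};
        deData[11] = new String[]{"Yes", "Yes", "Yes", "Yes", "Full", "low", "No", "No", "Burger", "3060", "Yes"};
        // Attributes to classify on, data set 1
        String[] attr = new String[]{"alt", "bar", "fri", "hun", "pat", "price", "rain", "res", "type", "est"};
        */

        // Input data set 2
        String[][] deData = new String[14][];
        deData[0] = new String[]{"youth", "high", "no", "fair", "no"};
        deData[1] = new String[]{"youth", "high", "no", "excellent", "no"};
        deData[2] = new String[]{"middle_aged", "high", "no", "fair", "yes"};
        deData[3] = new String[]{"senior", "medium", "no", "fair", "yes"};
        deData[4] = new String[]{"senior", "low", "yes", "fair", "yes"};
        deData[5] = new String[]{"senior", "low", "yes", "excellent", "no"};
        deData[6] = new String[]{"middle_aged", "low", "yes", "excellent", "yes"};
        deData[7] = new String[]{"youth", "medium", "no", "fair", "no"};
        deData[8] = new String[]{"youth", "low", "yes", "fair", "yes"};
        deData[9] = new String[]{"senior", "medium", "yes", "fair", "yes"};
        deData[10] = new String[]{"youth", "medium", "yes", "excellent", "yes"};
        deData[11] = new String[]{"middle_aged", "medium", "no", "excellent", "yes"};
        deData[12] = new String[]{"middle_aged", "high", "yes", "fair", "yes"};
        deData[13] = new String[]{"senior", "medium", "no", "excellent", "no"};
        // Attributes to classify on, data set 2
        String[] attr = new String[]{"age", "income", "student", "credit_rating"};

        LinkedHashSet<String> attributes = new LinkedHashSet<String>();
        for (int i = 0; i < attr.length; i++) {
            attributes.add(attr[i]);
        }

        // Map each attribute to the column index of its values in the data set
        HashMap<String, Integer> attrIndexMap = new HashMap<String, Integer>();
        for (int i = 0; i < attr.length; i++) {
            attrIndexMap.put(attr[i], i);
        }

        // Records to classify; initially the whole data set
        boolean[] flags = new boolean[deData.length];
        for (int i = 0; i < deData.length; i++) {
            flags[i] = true;
        }

        // Build the decision tree
        TreeNode root = new TreeNode();
        DecisionTree decisionTree = new DecisionTree(root);
        decisionTree.buildDecisionTree(root, deData, flags, attributes, attrIndexMap);
    }
}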
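
The attribute selection step of the listing can be checked in isolation. The following is a minimal sketch, not the listing's own code: the class name GainSketch and the helpers entropy and gain are hypothetical names introduced here, and the sketch assumes the standard ID3 definition Gain(A) = Info(D) - Info_A(D). It reuses data set 2 and prints the gain of each attribute at the root.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper class, introduced only for illustration.
public class GainSketch {
    // Data set 2 from the listing: age, income, student, credit_rating, class
    static final String[][] DATA = {
        {"youth", "high", "no", "fair", "no"},
        {"youth", "high", "no", "excellent", "no"},
        {"middle_aged", "high", "no", "fair", "yes"},
        {"senior", "medium", "no", "fair", "yes"},
        {"senior", "low", "yes", "fair", "yes"},
        {"senior", "low", "yes", "excellent", "no"},
        {"middle_aged", "low", "yes", "excellent", "yes"},
        {"youth", "medium", "no", "fair", "no"},
        {"youth", "low", "yes", "fair", "yes"},
        {"senior", "medium", "yes", "fair", "yes"},
        {"youth", "medium", "yes", "excellent", "yes"},
        {"middle_aged", "medium", "no", "excellent", "yes"},
        {"middle_aged", "high", "yes", "fair", "yes"},
        {"senior", "medium", "no", "excellent", "no"}
    };
    static final String[] ATTRS = {"age", "income", "student", "credit_rating"};

    // Base-2 entropy of a map from class label to record count
    static double entropy(Map<String, Integer> counts) {
        double total = 0.0;
        for (int c : counts.values()) total += c;
        double h = 0.0;
        for (int c : counts.values()) {
            if (c == 0) continue;
            double p = c / total;
            h -= p * Math.log(p) / Math.log(2.0);
        }
        return h;
    }

    // Information gain of splitting all rows of data on column col
    static double gain(String[][] data, int col) {
        int classCol = data[0].length - 1;
        Map<String, Integer> classCounts = new LinkedHashMap<>();
        Map<String, Map<String, Integer>> byValue = new LinkedHashMap<>();
        for (String[] row : data) {
            classCounts.merge(row[classCol], 1, Integer::sum);
            byValue.computeIfAbsent(row[col], k -> new LinkedHashMap<>())
                   .merge(row[classCol], 1, Integer::sum);
        }
        // Expected entropy after the split: weighted sum over attribute values
        double infoA = 0.0;
        for (Map<String, Integer> part : byValue.values()) {
            int size = 0;
            for (int c : part.values()) size += c;
            infoA += (double) size / data.length * entropy(part);
        }
        return entropy(classCounts) - infoA;
    }

    public static void main(String[] args) {
        for (int i = 0; i < ATTRS.length; i++) {
            System.out.printf("Gain(%s) = %.3f%n", ATTRS[i], gain(DATA, i));
        }
    }
}
```

On this data the largest gain belongs to age (roughly 0.25), so a tree built with this criterion would split on age at the root. The sketch uses nested maps instead of the flattened values_vector array of the listing purely for brevity; the counts it accumulates are the same.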